MN4 CTE-AMD System Architecture

MN4 CTE-AMD is a cluster based on AMD EPYC processors, with a Linux Operating System and an Infiniband interconnection network. Its main characteristic is the availability of 2 AMD MI50 GPUs per node, making it an ideal cluster for GPU accelerated applications.

It has the following configuration:

- 1 login node and 33 compute nodes, each of them:1 x AMD EPYC 7742 @ 2.250GHz (64 cores and 2 threads/core, total 128 threads per node)

- 1024GiB of main memory distributed in 16 dimms x 64GiB @ 3200MHz

- 1 x SSD 480GB as local storage

- 2 x GPU AMD Radeon Instinct MI50 with 32GB

- Single Port Mellanox Infiniband HDR100

- GPFS via two copper links 10 Gbit/s

Architecture overview

CPU EPYC 7742

- 64 cores (128 threads) with a base Clock of 2.25GHz (Max Boost Clock Up to 3.4GHz)

- Total L3 Cache 256MB

- PCI Express Version PCIe 4.0 x128

- Default TDP / TDP 225W

GPU Instinct™ MI50 (32GB)

- GPU Architecture Vega20 (Lithography TSMC 7nm FinFET)

- 3840 Stream Processors and 60 Compute Units (Peak Engine Clock 1725 MHz)

- Peak Performance:

- Half Precision (FP16) 26.5 TFLOPs

- Single Precision (FP32) 13.3 TFLOPs

- Double Precision (FP64) 6.6 TFLOPs

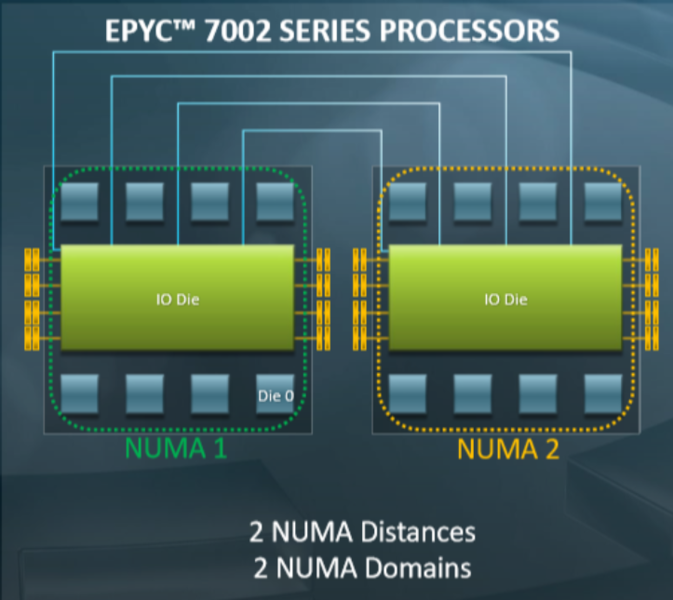

The EPYC 7002 Series processors use a Non-Uniform Memory Access (NUMA) Microarchitecture. The four logical quadrants in an AMD EPYC 7002 Series processor allow the processor to be partitioned into different NUMA domains. These domains are designated as NUMA per socket (NPS).

- NPS1: The processor is a single NUMA domain, i.e. all the cores on the processor, all memory connected to it and all PCIe devices connected to the processor are in one NUMA domain. Memory is interleaved across the eight memory channels.

- NPS2: The processor is partitioned into two NUMA domains. Half the cores and half the memory channels connected to the processor are grouped together into one NUMA domain. Memory is interleaved across the four memory channels in each NUMA domain

- NPS4: The processor is partitioned into four NUMA domains. Each logical quadrant of the processor is a NUMA domain. Memory is interleaved across the two memory channels in each quadrant. PCIe devices will be local to one of four NUMA domains on the processor depending on the quadrant of the IO die that has the PCIe root for that device.

Software Stack

AOCC

AMD Optimizing C/C++ Compiler (AOCC) is a highly optimized C, C++ and Fortran compiler for x86 targets especially for Zen based AMD processors. It supports Flang as the default Fortran front-end compiler. On MN4 CTE-AMD can be used doing:

module load aocc/2.2.0

And you can build a C or Fortran code doing:

$ clang [command line flags] xyz.c -o xyz

$ flang [command line flags] hello.f90 -o hello

AOCL

AMD Optimizing CPU Libraries(AOCL) are a set of numerical libraries optimized for AMD EPYCTM processor family.

AOCL comprise of eight packages:

- BLIS (BLAS Library) – BLIS is a portable open-source software framework for instantiating highperformance Basic Linear Algebra Subprograms (BLAS) functionality.

- libFLAME (LAPACK) - libFLAME is a portable library for dense matrix computations, providing much of the functionality present in Linear Algebra Package (LAPACK).

- FFTW – FFTW (Fast Fourier Transform in the West) is a comprehensive collection of fast C routines for computing the Discrete Fourier Transform (DFT) and various special cases thereof.

- LibM (AMD Core Math Library) - AMD LibM is a software library containing a collection of basic math functions optimized for x86-64 processor-based machines.

- ScaLAPACK - ScaLAPACK is a library of high-performance linear algebra routines for parallel distributed memory machines. It depends on external libraries including BLAS and LAPACK for Linear Algebra computations.

- AMD Random Number Generator Library - AMD Random Number Generator Library is a pseudorandom number generator library

- AMD Secure RNG - The AMD Secure Random Number Generator (RNG) is a library that provides APIs to access the cryptographically secure random numbers generated by AMD’s hardware random number generator implementation.

- AOCL-Sparse - Library that contains basic linear algebra subroutines for sparse matrices and vectors optimized for AMD EPYC family of processors

On MN4 CTE-AMD you can use it doing:

module load aocl/2.2

ROCm

AMD ROCm is the first open-source software development platform for HPC/Hyperscale-class GPU computing. AMD ROCm brings the UNIX philosophy of choice, minimalism and modular software development to GPU computing.

module load rocm

HIP

HIP (Heterogeneous-Computing Interface for Portability) is a C++ dialect designed to ease conversion of Cuda applications to portable C++ code. It provides a C-style API and a C++ kernel language. The HIPify tool automates much of the conversion work by performing a source-to-source transformation from Cuda to HIP. HIP code can run on AMD hardware (through the HCC compiler) or Nvidia hardware (through the NVCC compiler) with no performance loss compared with the original Cuda code.

An example of conversion from CUDA to HIP on MN4 CTE-AMD:

$ module load rocm

$ hipconvertinplace-perl.sh cuda.c

It generates a new file named "cuda.c.prehip" and the "cuda.c" is converted to HIP.

Intel Compilers and MKL

The Intel Compilers and the Intel Math Kernel Librarires (MKL) are developed and targeted for the Intel hardware, but they can be used on AMD architecture. They can be used on MN4 CTE-AMD loading the following modules:

module load intel/2018.4 mkl/2018.4

The recommended optimization flag for MN4 CTE-AMD is “-xAVX”. Despite EPYC processor fully supports AVX2, it might fail in some cases using “xCORE-AVX2”. The only sure way is testing it by trial and error.

The following flags allows to generate an optimized version that works on MareNostrum4, MN4 CTE-KNL and MN4 CTE-AMD:

-mavx2 -axCORE-AVX512,MIC-AVX512

Benchmark performance

HPL (Only CPU)

We have used the binary included on the MKL installation, modified to be able to run in on non-Intel architectures.

- Run:

| Parameter | Value |

|---|---|

| N |

122144

|

| NB | 122144 |

| P | 1 |

|

Q

|

2

|

The performance obtained is 1.8162 Tflops.

HPCG

- Compilation:

module load intel/2018.4 impi/2018.4 mkl/2018.4

flags: -march=core-avx2 -DHPCG_NO_OPENMP -O3

- Run:

64 MPI per node x 1 threads per MPI

n = 106

- Results:

22.0795 GFLOP/s

STREAM

- Compilation:

module load intel/2018.4 and OpenMPI/4.0.5

mpicc -O3 -qopenmp -ffreestanding -qopt-streaming-stores always -restrict -fma -xHost -fomit-frame-pointer stream_mpi.c -o stream_mpi -DSTREAM_ARRAY_SIZE=800000000 -DNTIMES=20

- Run:

Node on NPS4 mode. The highest bandwidth was obtained with 4 MPI processes and with 2 OpenMP threads per MPI process i.e. a configuration of 4 MPI x 2 OpenMP.

export OMP_SCHEDULE=STATIC

export OMP_DISPLAY_ENV=true

export OMP_NUM_THREADS=2

export OMP_PROC_BIND=spread

export OMP_PLACES=cores

export OMP_WAIT_POLICY=activ

mpirun --report-bindings --bind-to numa --rank-by core --map-by numa ./stream_mpi

- Results:

| Mode | Results (MB/s) |

|---|---|

|

Copy

|

167322.7

|

|

Scale

|

167964.5

|

|

Add

|

169308.2

|

|

Triad

|

168652.2

|